Python random forest classifier

8/13/2023

The RandomForestClassifier object has the following methods (source): apply(X) applies the trees in the forest to X and returns leaf indices; fit(X, y) builds a forest of trees from the training set (X, y); score(X, y) returns the mean accuracy on the given test data and labels. Training involves random choices, but you can make the call deterministic by passing the argument random_state to the constructor.

To learn about the different arguments of the RandomForestClassifier() constructor, feel free to visit the official documentation. However, the default arguments are often enough to create powerful classification meta-models.

Random forests build on decision tree learning. Read my article about decision trees to improve your understanding of this area.
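To make the methods and the random_state argument a bit more concrete, here is a minimal sketch. The training rows and the three new students are hypothetical values chosen only for illustration, and the features and labels are kept in two separate arrays for simplicity.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Hypothetical toy data: (math, language, creativity) scores -> study field
X = np.array([[9, 5, 6], [5, 1, 5], [8, 8, 8],
              [1, 10, 7], [1, 8, 1],
              [5, 7, 9], [1, 1, 6]])
y = np.array(["computer science", "computer science", "computer science",
              "literature", "literature", "art", "art"])

# Two forests built with the same random_state are trained identically
forest_a = RandomForestClassifier(n_estimators=10, random_state=0).fit(X, y)
forest_b = RandomForestClassifier(n_estimators=10, random_state=0).fit(X, y)

new_students = np.array([[8, 6, 5], [3, 7, 9], [2, 2, 1]])
same_predictions = np.array_equal(forest_a.predict(new_students),
                                  forest_b.predict(new_students))
print(same_predictions)

# score(X, y): mean accuracy on the given data and labels (here the training data)
train_accuracy = forest_a.score(X, y)
print(train_accuracy)

# apply(X): one leaf index per observation and per tree
leaf_indices = forest_a.apply(new_students)
print(leaf_indices.shape)
```

If you drop random_state, the two forests may disagree from run to run, which is the non-determinism discussed in this article.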

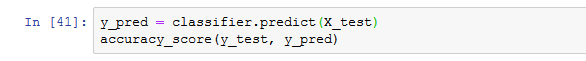

You can classify multiple observations at once by creating a multi-dimensional array with one row per observation. The output of the code appears under the comment # Result & puzzle.

Note that the result is still non-deterministic (which means the result may differ between executions of the code) because the random forest algorithm relies on a random number generator that returns different numbers at different points in time.
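The "one row per observation" layout can be checked with NumPy alone. The scores below are hypothetical; the point is only the shape of the arrays, since scikit-learn's predict() expects a two-dimensional input of shape (n_samples, n_features).

```python
import numpy as np

# A single observation: three hypothetical scores (math, language, creativity)
single = np.array([8, 6, 5])
print(single.shape)     # (3,)

# Reshape it into a 2D array with one row and three columns
single_2d = single.reshape(1, -1)
print(single_2d.shape)  # (1, 3)

# Several observations: one row per student
batch = np.array([[8, 6, 5],
                  [3, 7, 9],
                  [2, 2, 1]])
print(batch.shape)      # (3, 3)
```

An array like batch can be passed to predict() directly and yields one prediction per row.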

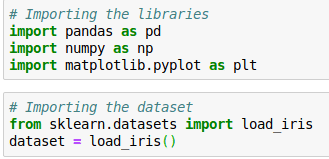

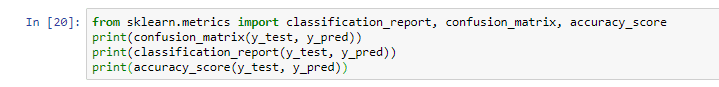

Injecting randomness during training leads to a variety of decision trees, which is exactly what we want. Here is how the prediction works for a trained random forest:

In the example, Alice has high math and language skills. The “ensemble” consists of three decision trees (building a random forest). To classify Alice, each decision tree is queried about Alice’s classification. Two of the decision trees classify Alice as a computer scientist. As this is the class with the most votes, it is returned as the final output of the classification.

Let’s stick to this example of classifying the study field based on a student’s skill level in three different areas (math, language, creativity). You may think that implementing an ensemble learning method is complicated in Python. But it’s not, thanks to the comprehensive scikit-learn library:

    # Dependencies
    import numpy as np
    from sklearn.ensemble import RandomForestClassifier

    # Data: student scores in (math, language, creativity) -> study field
    X = np.array(...)  # the training rows are not shown here

    # Features: all but the last column; labels: the last column
    Forest = RandomForestClassifier(n_estimators=10).fit(X[:, :-1], X[:, -1])

Take a guess: what’s the output of this code snippet?

After initializing the labeled training data, the code creates a random forest using the constructor of the class RandomForestClassifier with one parameter, n_estimators, that defines the number of trees in the forest. Next, we populate the model that results from this initialization (an empty forest) by calling the function fit(). To this end, the input training data consists of all but the last column of array X, while the labels of the training data are defined in the last column. As in the previous examples, we use slicing to extract the respective columns from the data array X.

Related Tutorial: Introduction to Python Slicing

The classification part is slightly different in this code snippet. I wanted to show you how to classify multiple observations instead of only one.
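If you want to watch the individual trees "vote" on Alice, the following sketch may help. The training rows and Alice's scores are assumptions made up for this illustration. Also note that scikit-learn combines the trees by averaging their predicted class probabilities rather than by counting hard votes, so the per-tree list below is only an approximation of what predict() does internally.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Hypothetical training data; features and labels are kept in separate arrays
X = np.array([[9, 5, 6], [5, 1, 5], [8, 8, 8],
              [1, 10, 7], [1, 8, 1],
              [5, 7, 9], [1, 1, 6]])
y = np.array(["computer science", "computer science", "computer science",
              "literature", "literature", "art", "art"])

# Three trees, as in the Alice example; random_state only makes the run repeatable
forest = RandomForestClassifier(n_estimators=3, random_state=0).fit(X, y)

# Assumed scores for Alice: high math and language skills
alice = np.array([[9, 9, 4]])

# Each fitted tree returns an index into forest.classes_
votes = [forest.classes_[int(tree.predict(alice)[0])]
         for tree in forest.estimators_]
print(votes)

# The forest's combined answer
final_prediction = forest.predict(alice)[0]
print(final_prediction)
```

With only three trees the votes can easily change when you pick a different random_state, which is one reason larger forests are usually preferred.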
Once every model has classified the input, you return the class that was returned most often as a “meta-prediction”. This is the final output of your ensemble learning algorithm.

Random forests are a special type of ensemble learning algorithm. A random forest consists of many decision trees. Each decision tree is built by injecting randomness into the tree generation procedure during the training phase.
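The text above does not spell out how the randomness is injected. One common source of randomness in random forests, assumed here purely for illustration, is bootstrap sampling: every tree is trained on its own sample drawn with replacement from the training rows. The rows and the helper bootstrap_sample() are hypothetical.

```python
import random

# Hypothetical training rows: (math, language, creativity, study field)
training_rows = [
    (9, 5, 6, "computer science"),
    (5, 1, 5, "computer science"),
    (8, 8, 8, "computer science"),
    (1, 10, 7, "literature"),
    (1, 8, 1, "literature"),
    (5, 7, 9, "art"),
    (1, 1, 6, "art"),
]

def bootstrap_sample(rows, seed):
    """Draw len(rows) rows with replacement: some rows repeat, others are left out."""
    rng = random.Random(seed)
    return [rng.choice(rows) for _ in rows]

# One bootstrap sample per tree; each tree would be trained on its own sample
samples = [bootstrap_sample(training_rows, seed) for seed in range(3)]
for tree_index, sample in enumerate(samples):
    fields = [row[-1] for row in sample]
    print(tree_index, fields)
```

Because every tree sees a slightly different data set, the trees end up with different structure, and their disagreements are what the final vote averages out.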

You may already have studied multiple machine learning algorithms and realized that different algorithms have different strengths. For example, neural network classifiers can generate excellent results for complex problems. However, they are also prone to “overfitting” the data because of their powerful capacity for memorizing fine-grained patterns in the data. The simple idea of ensemble learning for classification problems leverages the fact that you often don’t know in advance which machine learning technique works best.

How does ensemble learning work? You create a meta-classifier consisting of multiple types or instances of basic machine learning algorithms. In other words, you train multiple models. To classify a single observation, you ask all models to classify the input independently.
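The ensemble idea (several models, each asked independently, with the most frequent answer winning) fits into a few lines of plain Python. The three rule-based "models" and the score thresholds are hypothetical stand-ins for trained classifiers.

```python
from collections import Counter

# Three hypothetical, deliberately simple "models". Each one maps
# (math, language, creativity) scores to a study field.
def math_model(scores):
    math_score, _, _ = scores
    return "computer science" if math_score >= 7 else "art"

def language_model(scores):
    _, language_score, _ = scores
    return "literature" if language_score >= 7 else "computer science"

def creativity_model(scores):
    _, _, creativity_score = scores
    return "art" if creativity_score >= 7 else "computer science"

def ensemble_predict(models, scores):
    """Ask every model independently and return the most frequent answer."""
    votes = [model(scores) for model in models]
    winner, _ = Counter(votes).most_common(1)[0]
    return winner, votes

models = [math_model, language_model, creativity_model]
prediction, votes = ensemble_predict(models, (9, 8, 4))
print(votes)       # ['computer science', 'literature', 'computer science']
print(prediction)  # computer science
```

A real ensemble would replace the hand-written rules with trained models, but the voting step stays the same.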
